Have you ever listened to Morse code?

To most of us, it’s just a string of beeps. To someone trained, it’s a message.

AI feels like that right now. So much news, hype, anxiety, promise, and prediction is pouring over us that many people have started tuning it out. That is understandable. It is also a mistake.

This is the first in what I expect will be a recurring series of reflections on AI at LMU. We are trying to approach it the only way I know how to approach important things here: thoughtfully, in conversation with our mission, and without pretending certainty where none exists. At a Catholic university shaped by the Jesuit and Marymount educational traditions, we have a responsibility to ask not only what a technology can do, but what it should do in service of our humanity and the common good.

Most people first meet AI through a chatbot. But the chat window is only one doorway into a much larger reality. AI is already shaping search, writing, coding, accessibility tools, fraud detection, and increasingly, cybersecurity. Stanford’s Institute for Human-Centered AI notes that generative AI reached population-level adoption faster than the personal computer or the internet. However you measure it, AI is no longer a side conversation. It is part of ordinary life.

Why universities should care

Higher education should care for many reasons. Let me focus on two.

First, our students are entering a world in which AI literacy has become an ordinary professional competence — closer to email or spreadsheets than to an elective skill. In a few years, not knowing how to use AI may feel a lot like not knowing how to use a browser.

Second, universities are unusually complex and unusually open. We are communities built on access, collaboration, research, and trust. That is one of our strengths. It also means we manage broad digital ecosystems: teaching platforms, residence hall networks, financial aid, payroll, research data, donor systems, vendor relationships, and thousands of connected devices.

Recent reporting makes the picture plain. The Chronicle of Higher Education found that more than half of campus technology leaders have already experienced at least one AI-generated impersonation or phishing incident, while only a small fraction have meaningfully updated their cybersecurity protocols in response. Inside Higher Ed reports that many institutions still lack comprehensive AI strategies — or even consistent student access to these tools.

In other words: higher education is responding, but unevenly, and often in public.

That is why LMU’s approach matters. We do not need to be reckless early adopters, and we do not need to be nostalgic holdouts. We need to be thoughtful, collaborative, and grounded — preparing students for the world they are entering while keeping the institution that serves them safe and steady.

The cybersecurity signal I want on our radar

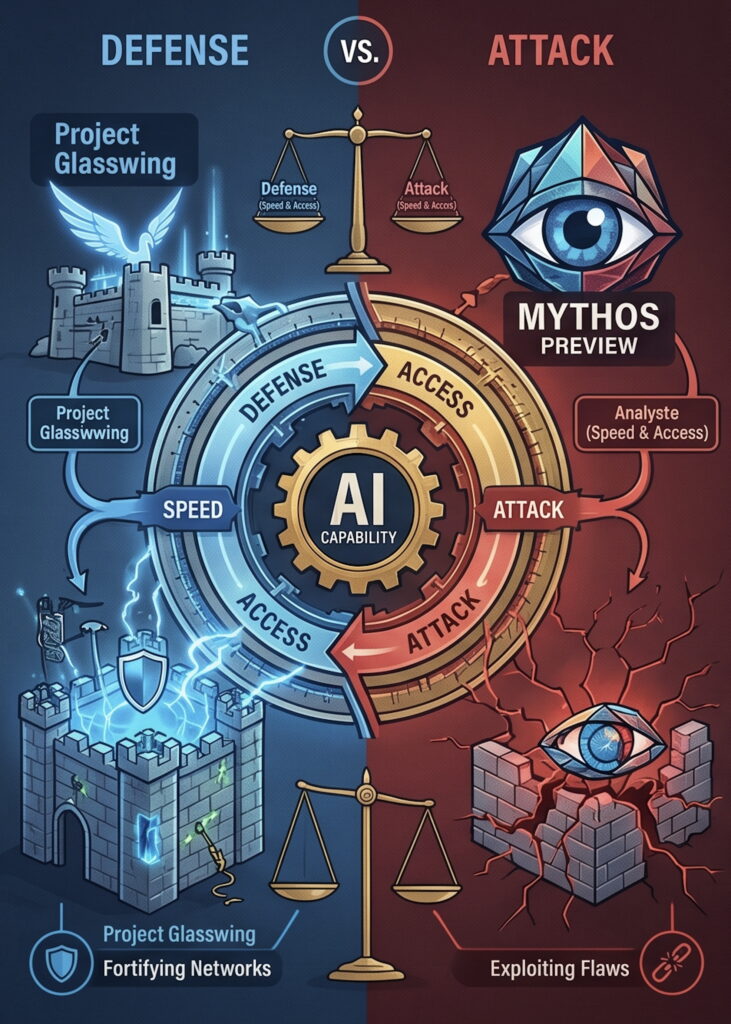

Earlier this month, Anthropic announced something called Project Glasswing. It is the specific development that prompted me to write this post.

Strip away the techy terms, and the point is simple:

AI is making both cyber defense and cyber attack more scalable.

A quick decoder ring helps. Anthropic is the company. Claude is its family of AI models. Mythos Preview is the especially powerful model at the center of this effort. Project Glasswing is the initiative built around using that model for defensive cybersecurity.

Those names sound like Silicon Valley wandered into a fantasy novel, so let me put it more plainly.

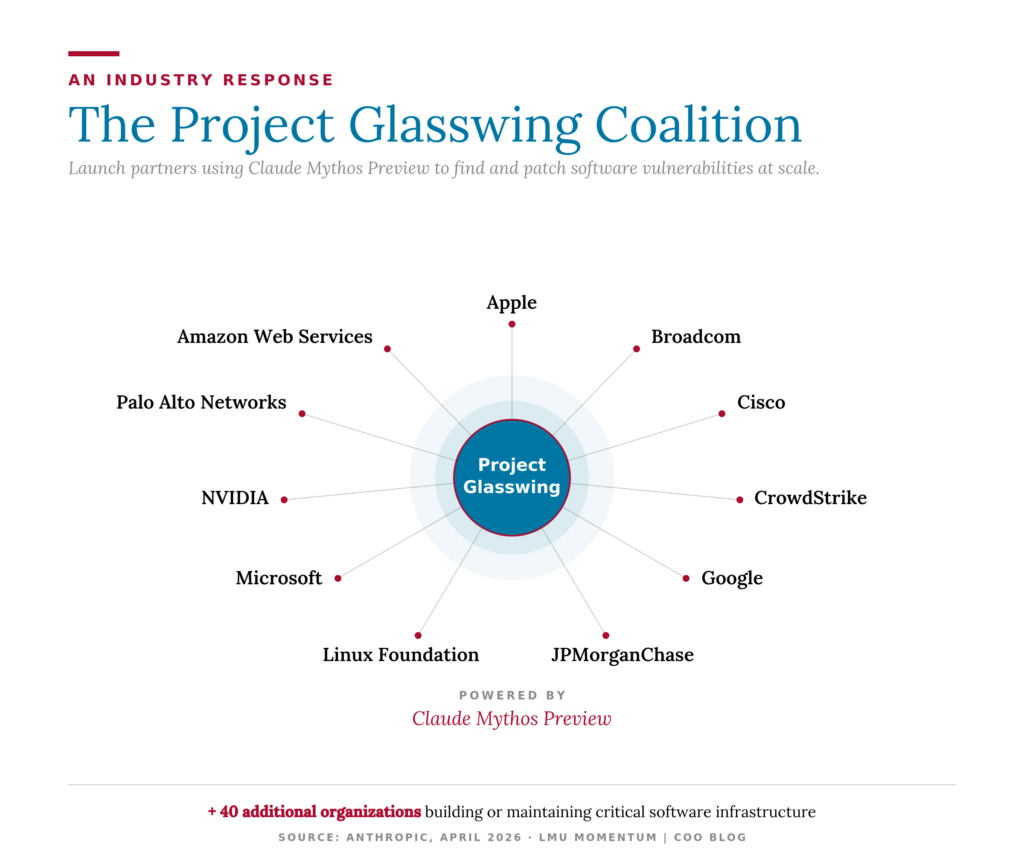

Anthropic says it has built an AI system so capable at finding serious software flaws that it is keeping access tightly restricted and putting it in defenders’ hands first. It is working with a coalition of major partners — including Apple, Google, Microsoft, AWS, Cisco, CrowdStrike, NVIDIA, JPMorgan Chase, and the Linux Foundation, among others — which tells you how seriously this is being taken.

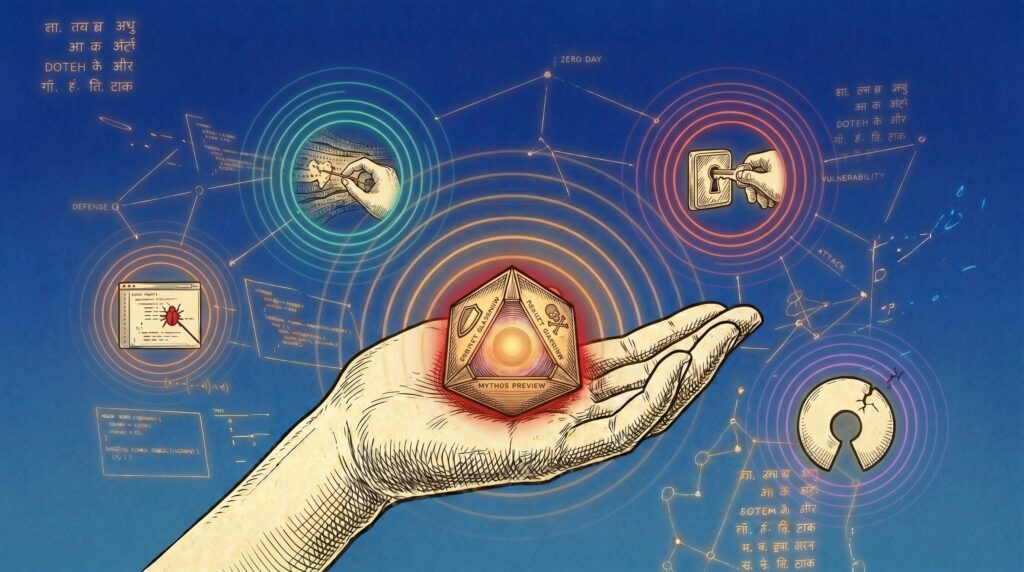

Anthropic says Mythos Preview has already identified thousands of serious, previously unknown vulnerabilities across every major operating system and every major web browser. A vulnerability is just a weakness in software that can be exploited. A “zero-day” sounds like a movie title, but it only means a flaw the owner has had zero days to fix because nobody knew it was there.

A flaw in a hardened system like OpenBSD is like finding a weakness at Fort Knox — something long considered battle-tested. Mythos turned up a flaw in OpenBSD that had been sitting there, undetected, for 27 years. A flaw in something like FFmpeg — the open-source library that quietly powers most of the video you watch online, from streaming services to video calls — is more like discovering an issue in the plumbing behind the walls. Most people never see it, but almost everything depends on it. Mythos found a 16-year-old bug in FFmpeg buried in a single line of code that automated testing tools had hit five million times without catching.

The names themselves are not the point. The point is that AI is getting better — quickly — at finding cracks in the digital infrastructure we all depend on.

That is the signal.

Work that used to take expertise and time now takes less of both, and it can increasingly be put into more hands, more quickly. Defenders can find and fix more weaknesses faster. Attackers, if they gain comparable capabilities, can hunt for weak points faster too. What once took months can now happen in minutes.

Here is the part worth sitting with for a moment. The same tool that helps defenders patch holes can, in different hands, help attackers find them. Right now, the strongest versions are on a short leash — Anthropic chose not to release this one publicly precisely because it is so good at what it does. But that kind of gate does not hold forever. Capability tends to spread. Anthropic itself has said we should prepare for a world in which tools like this are widely available within the next year or two.

That is the math behind the urgency. Not panic — preparation.

This is where it gets real

Some of you reading this have probably already brushed up against an early version of what I am describing and not realized it. The voicemail that sounded like your boss but felt slightly off. The email from a “colleague” whose tone was a hair too smooth. The Zoom face that did not quite line up with the audio. These are not science-fiction scenarios. They are this year’s incident reports at peer institutions, and the technology that produces them is improving by the month.

A university is, in many ways, a small city with a research agenda. People live here, study here, teach here, work here, submit records here, conduct research here — and yes, click links here.

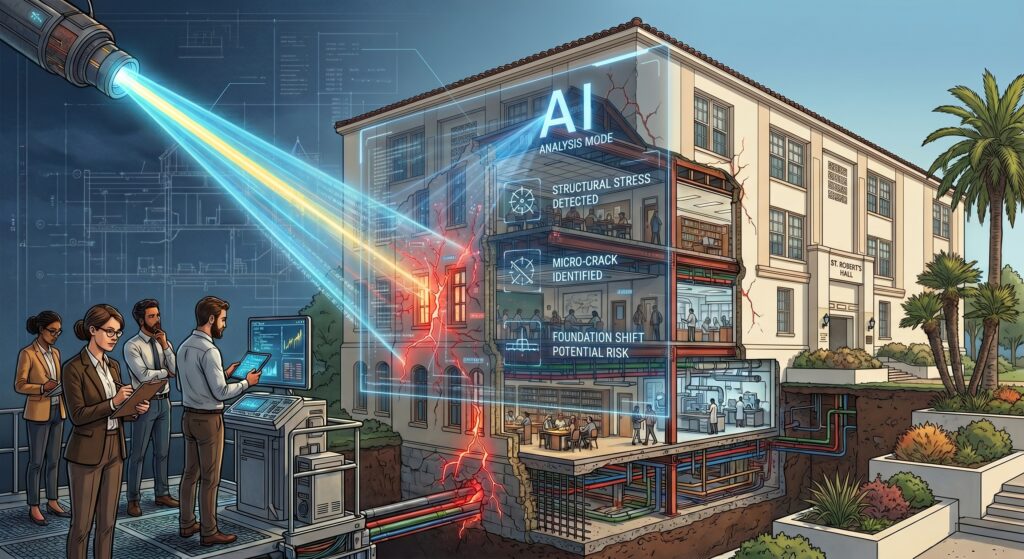

That means cybersecurity is not some distant technical concern. It is part of how we protect our community and keep the institution functioning.

It is also why tabletop exercises and process-tightening are not bureaucratic chores. They are the digital equivalent of locking doors, fastening seatbelts, and installing airbags. You do not notice good security. You notice the day it is missing.

A reasonable question to ask after reading this far is what LMU specifically is doing about all of it. I am not going to itemize our defensive playbook in a public post — that would be its own small contribution to the problem. What I can say is that we take the work seriously, invest in it accordingly, and have leaders I genuinely trust to do it well. Tamara Armstrong, our Vice President for Information Technology Services and Chief Information Officer, and the ITS team she leads, handle the patching, planning, testing, vendor management, and quiet vigilance that nobody celebrates — until the day everyone is glad it was there.

Our response cannot be purely technical, of course. LMU is also building the broader literacy side of this work through university-wide conversations, the leadership of our faculty (including the new LLS Impact initiative at LMU Loyola Law School), the LMU Task Force for the Use of Generative AI, and a growing set of AI resources through ITS.

Better tools help. Better judgment decides.

Cybersecurity is a team sport

(In a separate post, I will share video examples of how far cloning and generative AI have progressed.)

Calling it a team sport does not mean everyone needs to become a security engineer by Friday. It does mean a few simple habits carry more weight than ever, because keeping our services and systems reliable will take all of us.

The strongest system in the world can still be undone by one rushed click.

Slow down the urgent request. If an email, text, or voicemail is trying to rush you — especially involving money, passwords, or sensitive data — pause. Urgency is one of the oldest tricks in the book.

Verify outside the original channel. If something feels unusual, do not confirm it by replying in the same thread. Start a new message. Call a known number. Ask a second human being. In the era of AI deepfakes, audio and video are now evidence, not proof. Are you sure that message actually came from me?

Use sanctioned tools and the basics without apology. Multifactor authentication, software updates, and approved platforms go a long way.

Be careful what you feed the chatbots. A free, public AI tool is not a private space. Do not paste student records, payroll data, donor information, research that is not yours to share, or anything else confidential into a tool that may store or train on what you give it. If you need AI to help with sensitive work, ask ITS about sanctioned options.

Report the weird thing. In cybersecurity, early reporting is not overreacting. It is community care. When in doubt, our ITS Help Desk wants to hear from you — helpdesk@lmu.edu or 310.338.7777. They would much rather hear about a false alarm than miss a real one.

The human work ahead

No university, company, or government has solved all of this. The internet will continue to supply confident people who say otherwise — often before breakfast.

What LMU does have is something more durable: a mission that asks us to pair innovation with conscience, speed with judgment, and technology with care for the whole person.

AI will change how we work. It should not change who we are.

So yes, AI is moving quickly. Yes, it is reshaping the security landscape. And yes, that requires vigilance. But the right response is not alarmism, and it is not denial. It is awareness, preparation, humility, and shared responsibility.

This will not be the last time I write about AI here. In future posts I want to dig into how we are integrating AI thoughtfully and mission-forward — in our operations, in our student experience, and in how we engage with one another as a community that is values-first.

For now, the signal I want us to hear is simple: AI is changing cybersecurity for defenders and attackers alike. The best response is to stay thoughtful, stay disciplined, and stay true to our values.

— John

P.S. A few useful resources

- Humanity’s Last Exam leaderboard

- The Chronicle of Higher Education — Campus Tech Team’s Views on AI

- Inside Higher Ed — AI strategy and student access coverage

- LMU Task Force for the Use of Generative AI

- LMU ITS AI Hub

- Anthropic Project Glasswing overview

- LLS Impact

- CISA cybersecurity basics

- FTC guidance on scams and impersonation

- AI-native search tools: ChatGPT Search, Google AI Mode, Perplexity
